MLASS

Multi Latent Autoregressive Source Separation

This project extended the Latent Autoregressive Source Separation (LASS) method to support more than two sources while keeping memory requirements feasible.

LASS: The Original Work

LASS separates mixed sources without needing additional gradient-based optimization. It uses a VQ-VAE to embed signals into a discretized latent space and autoregressive priors to sample original sources from a joint posterior.
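As a rough illustration of the "discretized latent space" step, the sketch below shows the nearest-codebook lookup that a VQ-VAE performs on encoder outputs. It is a toy example, not the LASS implementation: the function name `quantize`, the tiny 2-D codebook, and the sample latents are all hypothetical.

```python
import numpy as np

def quantize(latents: np.ndarray, codebook: np.ndarray) -> np.ndarray:
    """Map each latent vector to the index of its nearest codebook entry.

    latents:  array of shape (T, d), continuous encoder outputs (toy data here)
    codebook: array of shape (k, d), the k discrete codes
    """
    # Squared Euclidean distance between every latent and every code: shape (T, k)
    dist2 = ((latents[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=-1)
    # Index of the closest code for each latent: shape (T,)
    return dist2.argmin(axis=1)

# Hypothetical codebook with k = 3 codes in d = 2 dimensions
codebook = np.array([[0.0, 0.0], [1.0, 1.0], [-1.0, 1.0]])
latents = np.array([[0.9, 1.2], [-0.8, 0.7], [0.1, -0.1]])

indices = quantize(latents, codebook)
print(indices)  # [1 2 0]
```

Once every signal is a sequence of such indices, an autoregressive prior can assign probabilities to index sequences, which is what makes sampling in the discrete space possible.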

MLASS: My Extension

I proposed two methods to keep memory complexity from growing exponentially with the number of sources ($n$), avoiding the $O(k^n)$ cost of the original method.

1. Belief Propagation (BP): Reduces memory complexity to $O((n-1)k^3)$, making multi-source separation viable on standard hardware.

2. Probabilistic Extractor (PE): An alternative sampling approach for source separation in latent space.
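To get a feel for the two bounds quoted above, the following sketch simply evaluates $k^n$ and $(n-1)k^3$ for a few values of $n$. It assumes $k$ denotes the number of discrete latent codes and uses a hypothetical value of 256; the function names are illustrative, and the counts are table entries rather than bytes.

```python
def joint_entries(k: int, n: int) -> int:
    """Entry count for the O(k^n) bound (constants ignored)."""
    return k ** n

def bp_entries(k: int, n: int) -> int:
    """Entry count for the O((n-1) k^3) bound (constants ignored)."""
    return (n - 1) * k ** 3

k = 256  # hypothetical codebook size, chosen only for illustration

for n in range(2, 7):
    print(f"n={n}: k^n ~ {joint_entries(k, n):.3e}, (n-1)k^3 ~ {bp_entries(k, n):.3e}")
```

Constant factors are ignored, so the numbers only illustrate growth rates: the first bound is multiplied by $k$ with every added source, while the second grows by one extra $k^3$ term.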

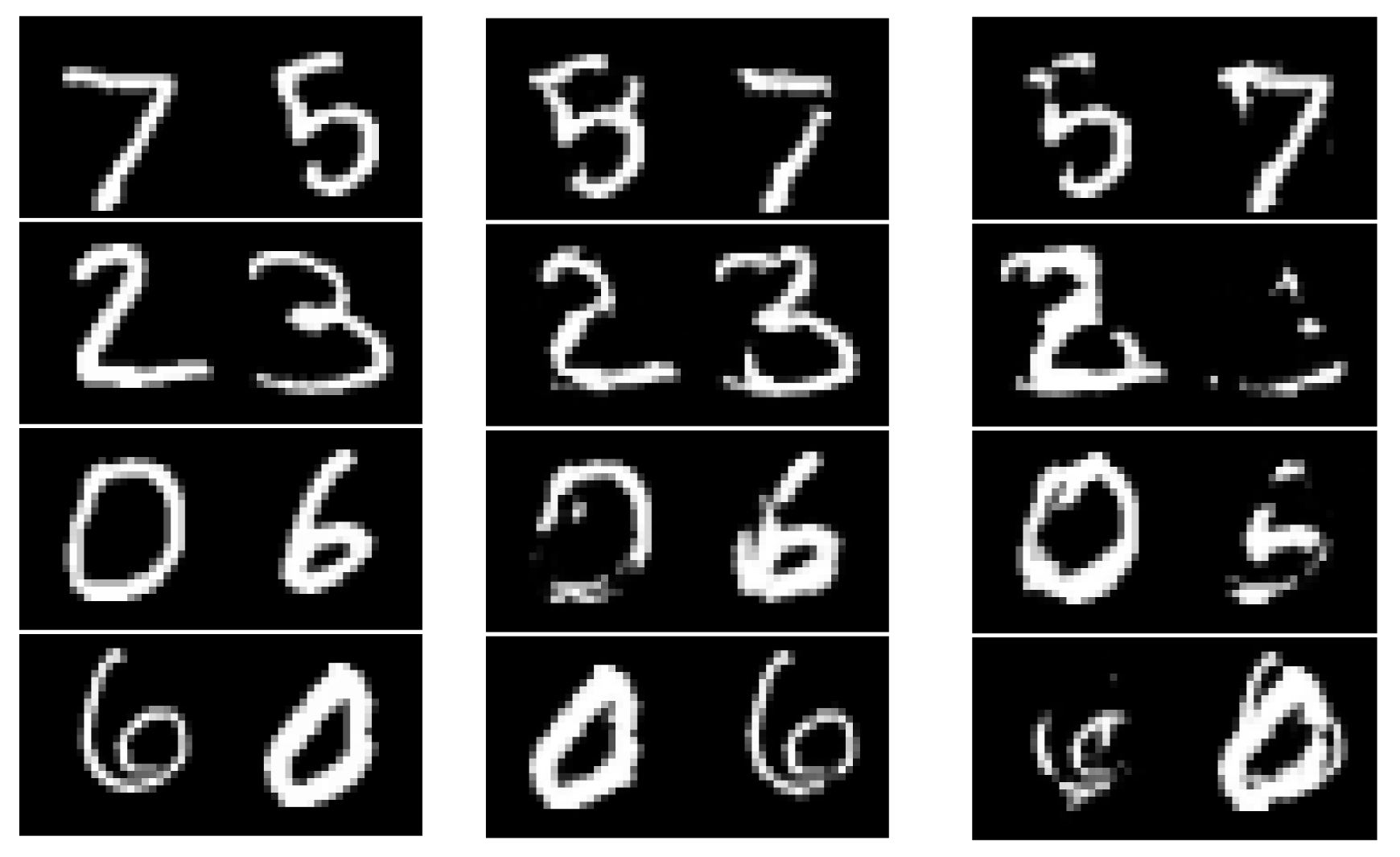

2 Sources: Original - BP - PE

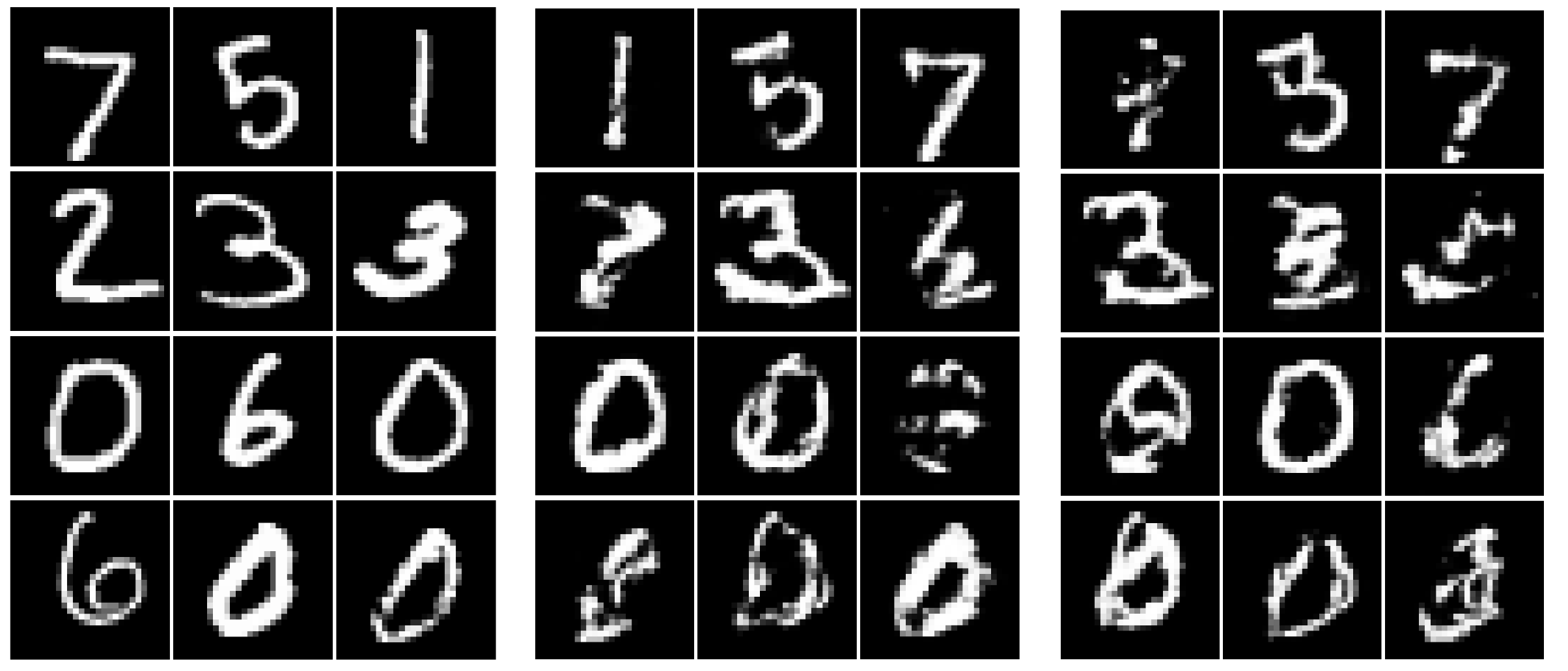

3 Sources: Original - BP - PE

Quantitative Benchmarks

MNIST Dataset (PSNR)

| Method | 2 Sources | 3 Sources |
|---|---|---|
| LASS | 24.23 ± 6.23 | N/A |
| MLASS-PE | 16.87 ± 3.77 | 13.64 ± 1.76 |
| MLASS-BP | 19.30 ± 5.68 | 14.19 ± 2.23 |
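For reference, PSNR (in dB, higher is better) can be computed as in the minimal sketch below. It assumes images scaled to the range [0, 1]; the function name `psnr` and the `max_value` parameter are illustrative, and the exact evaluation code behind the table may differ.

```python
import numpy as np

def psnr(reference: np.ndarray, estimate: np.ndarray, max_value: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB between a reference and an estimate."""
    mse = np.mean((reference - estimate) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))

# Toy 28x28 example: a constant error of 0.1 gives an MSE of 0.01
reference = np.zeros((28, 28))
estimate = np.full((28, 28), 0.1)
print(psnr(reference, estimate))
```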

SLAKH Dataset (SDR)

| Method | 2 Sources | 3 Sources |
|---|---|---|
| LASS | 5.01 ± 2.39 | N/A |
| MLASS-BP | 3.09 ± 3.23 | -0.44 ± 2.96 |
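The sketch below shows one common way to compute SDR (in dB, higher is better). It assumes the plain signal-to-distortion ratio between a reference waveform and its estimate; the table may have been produced with a different variant (for example a scale-invariant one), and the name `sdr` and the `eps` stabilizer are illustrative.

```python
import numpy as np

def sdr(reference: np.ndarray, estimate: np.ndarray, eps: float = 1e-12) -> float:
    """Signal-to-distortion ratio in dB: reference energy over error energy."""
    error = reference - estimate
    ratio = (np.sum(reference ** 2) + eps) / (np.sum(error ** 2) + eps)
    return float(10.0 * np.log10(ratio))

# Toy example: the estimate is the reference scaled by 0.9
reference = np.ones(100)
estimate = 0.9 * reference
print(sdr(reference, estimate))
```

Under this definition a negative value, such as the 3-source result above, means the error energy exceeds the energy of the reference signal.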